The Three Ways in the AI Era -- Part 1

The DevOps Handbook Turns 10. AI Just Broke Half of It.

The DevOps Handbook is 10 years old this year. Most teams never really lived its principles. Now AI promises to finally unlock them — and instead, it’s making things worse.

This is the first article in a 6-part series. We will look at Gene Kim’s Three Ways through the lens of AI-assisted development. And we will be honest: AI is not a magic fix. Without structure, it breaks DevOps harder than anything else I have seen in 15 years of enterprise work.

PROBLEM

Let me describe a team I worked with some time ago.

They were a solid .NET + React shop on Azure Kubernetes Service, shipping through Azure DevOps. Classic setup. Their DORA metrics were OK: deployment frequency daily, lead time around two days, change failure rate around 10%.

Then they rolled out GitHub Copilot and an AI code review bot across the org. Everyone was excited.

Six months later:

- Deployment frequency went up (3x). Good.

- Lead time doubled. Bad.

- Change failure rate tripled. Very bad.

- Code reviewers started ignoring the AI bot because 80% of its comments were false alarms.

- Two junior devs merged a Kubernetes manifest they could not explain when production broke.

These are the kinds of numbers that show up in the State of DevOps Report for teams with high AI adoption but low process discipline. The report calls it out plainly: AI is not a substitute for good engineering practices. It amplifies whatever you already do, good or bad.
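To see why "more deployments" can still feel slower, it helps to multiply the numbers out. The sketch below is only arithmetic on the figures quoted above; it assumes "daily" means one deployment per day and "around 10%" means exactly 0.10, and the `DoraSnapshot` class is a made-up helper, not part of any DORA tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DoraSnapshot:
    """A rough snapshot of three DORA metrics (hypothetical helper)."""
    deploys_per_day: float
    lead_time_days: float
    change_failure_rate: float  # fraction of deployments that fail, 0..1

    @property
    def failed_deploys_per_day(self) -> float:
        # Expected number of failed deployments per day.
        return self.deploys_per_day * self.change_failure_rate


def apply_multipliers(base: DoraSnapshot, deploy_x: float,
                      lead_x: float, cfr_x: float) -> DoraSnapshot:
    """Scale a baseline by the changes reported after the AI rollout."""
    return DoraSnapshot(
        deploys_per_day=base.deploys_per_day * deploy_x,
        lead_time_days=base.lead_time_days * lead_x,
        # A failure rate cannot exceed 100%.
        change_failure_rate=min(1.0, base.change_failure_rate * cfr_x),
    )


# Assumed baseline: 1 deploy/day, 2 days lead time, 10% change failure rate.
before = DoraSnapshot(deploys_per_day=1.0, lead_time_days=2.0,
                      change_failure_rate=0.10)
# Six months later: 3x deployments, 2x lead time, 3x failure rate.
after = apply_multipliers(before, deploy_x=3, lead_x=2, cfr_x=3)

print(f"failed deploys/day before: {before.failed_deploys_per_day:.2f}")
print(f"failed deploys/day after:  {after.failed_deploys_per_day:.2f}")
```

Under these assumptions, the team goes from roughly one failed deployment every ten days to roughly nine every ten days. Tripling both frequency and failure rate multiplies the absolute number of failures by about nine.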

If your team is shipping more code but feeling slower, this article is for you.

SOLUTION

Let’s go back to basics. Gene Kim, Jez Humble, Patrick Debois, and John Willis wrote the 1st edition of the DevOps Handbook in 2016. The 2nd edition came in 2021, with a short mention of machine learning — but this was before ChatGPT existed. The book is still the best summary of why DevOps works, and it is built on three ideas called The Three Ways.

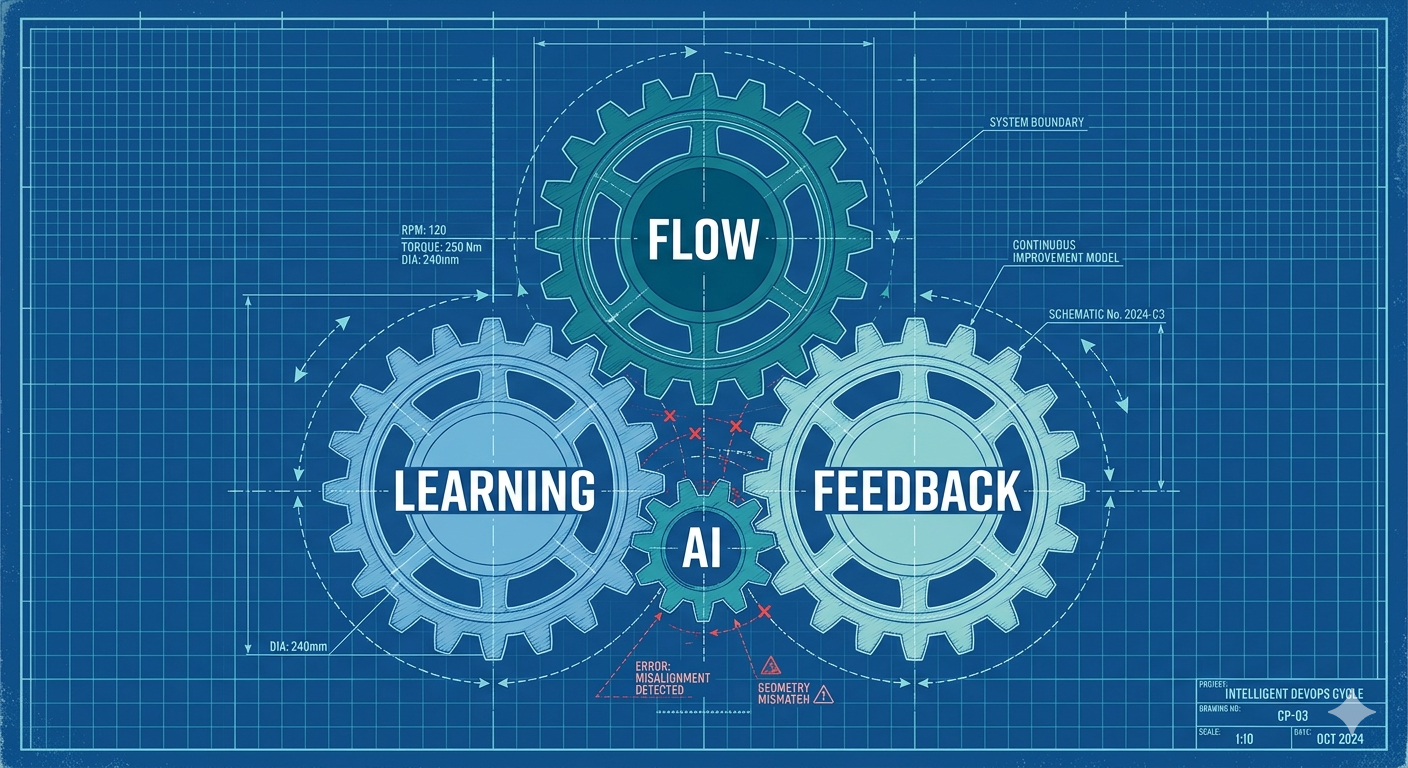

First Way: Flow

Work should move smoothly from development to production. Small batches. Visible work. No big handoffs. You should be able to push a tiny change to prod with confidence.

Second Way: Feedback

Problems should be detected fast and sent back upstream. Telemetry everywhere. Fast tests. Code review that catches real issues, not style nits. If something breaks in prod, the team that wrote it hears about it first.

Third Way: Continuous Learning

The team gets better over time. Postmortems without blame. Safe experiments. Reading, training, sharing knowledge. Technical debt gets paid down, not just piled up.

Here is the thesis of this series:

AI does not automatically support The Three Ways. Without structure, AI breaks all three: bigger batches, noisier feedback, and less learning.

This is not an anti-AI post. I use AI every day. But the teams that win with AI are the ones using structured approaches. We will look at three of them in this series:

| Framework | What it is | Where it helps |
|---|---|---|
| SDD (Spec-Driven Development) | Write a spec first. AI builds from the spec, not from vague prompts. | First Way (Flow). Keeps batches small and verifiable. |
| ATLAS | Human checklist for the whole lifecycle: Architect, Trace, Link, Assemble, Stress-test. | All Three Ways. Keeps the human in the loop. |
| GOTCHA | Prompt structure: Goals, Orchestration, Tools, Context, Heuristics, Args. | Second Way (Feedback). Makes AI output useful instead of noisy. |

SDD has real industry traction right now. GitHub Spec Kit and AWS Kiro are both built on the idea that the spec is the source of truth, not the code. ATLAS and GOTCHA are frameworks I apply in my own work. Together, they cover what SDD alone does not: the DevOps lifecycle, and the prompt itself.
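As a rough illustration of what "prompt structure" can mean in practice, here is a minimal sketch that treats the six GOTCHA sections as fields of a small data structure. Only the six section names come from the table above. The `GotchaPrompt` class, the `render` format, and every example value are assumptions made up for this sketch, not the framework's official definition.

```python
from dataclasses import dataclass, field


@dataclass
class GotchaPrompt:
    """Hypothetical container for the six GOTCHA sections."""
    goals: str
    orchestration: str
    tools: list[str]
    context: str
    heuristics: list[str]
    args: dict[str, str] = field(default_factory=dict)

    def render(self) -> str:
        """Render the sections as one plain-text prompt."""
        sections = [
            ("Goals", self.goals),
            ("Orchestration", self.orchestration),
            ("Tools", ", ".join(self.tools)),
            ("Context", self.context),
            ("Heuristics", "\n".join(f"- {h}" for h in self.heuristics)),
            ("Args", "\n".join(f"{k}={v}" for k, v in self.args.items())),
        ]
        return "\n\n".join(f"## {title}\n{body}" for title, body in sections)


# Example values are invented for illustration only.
review_prompt = GotchaPrompt(
    goals="Review this pull request for real defects, not style nits.",
    orchestration="Read the diff first, then comment only on changed lines.",
    tools=["diff viewer"],
    context="ML-KEM-768 is a current post-quantum standard; "
            "do not flag it as deprecated.",
    heuristics=["Skip a comment if you cannot point to a concrete failure."],
    args={"max_comments": "5"},
)

rendered = review_prompt.render()
print(rendered)
```

The point of a fixed structure is that domain context, such as which algorithms are current, has an explicit slot instead of being left to chance.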

More on each in the next articles. For now, let’s see what happens when a team uses none of them.

EXECUTE

Meet our example team. They need to integrate QuantumAPI — a post-quantum encryption service — into their existing .NET/React application on AKS, before a compliance audit in 8 weeks.

Nothing crazy. They want to:

- Encrypt sensitive fields at rest using QuantumAPI’s vault.

- Sign API responses using ML-DSA (a post-quantum signature algorithm).

- Rotate keys automatically every 90 days.

We will follow this same team across the whole series. Here is what happened when they tried to do this with AI, but without any of the three frameworks.

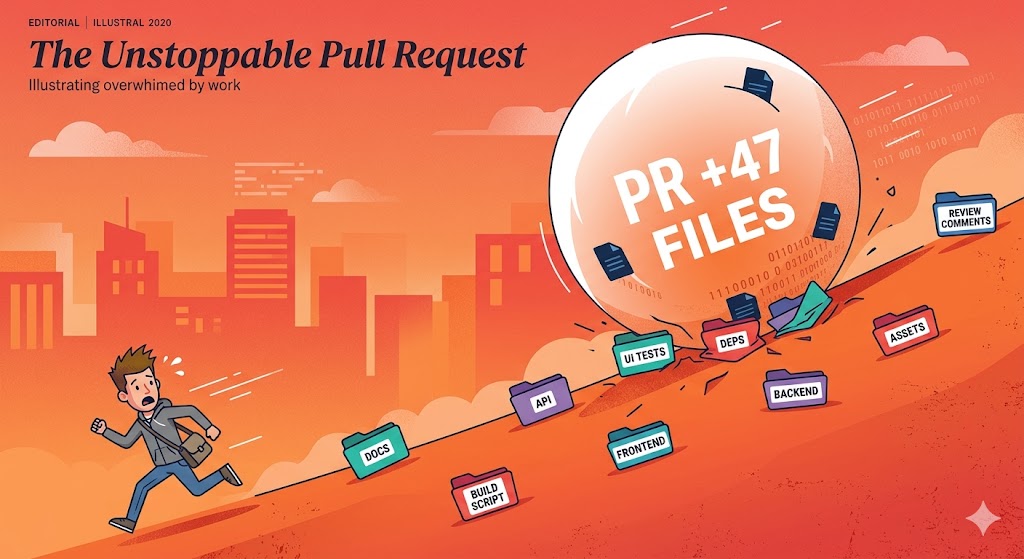

Scene 1 — The First Way breaks

A senior dev opens Copilot Chat and types:

“Integrate QuantumAPI into our API and frontend. We need encryption at rest and signed responses.”

45 minutes later, the AI has produced a pull request with:

- 47 files changed

- Changes to authentication middleware (not asked for)

- New retry logic in 12 controllers (not asked for)

- A new `QuantumApiClient` class with 340 lines

- Frontend changes to 8 React components

- A new Docker base image

The reviewer opens the PR. Scrolls for 20 seconds. Closes the tab.

```csharp
// From the generated PR: one of the 47 files
public class PortfolioController : ControllerBase
{
    private readonly IQuantumApiClient _quantum;
    private readonly IAuthMiddleware _auth;   // Why is auth here?
    private readonly IRetryPolicy _retry;     // Why is retry here?
    private readonly ILogger _logger;

    public async Task<IActionResult> GetPortfolio(int id)
    {
        // 180 lines of generated logic, touching 4 concerns
    }
}
```

Batch size went from 3 files (their usual manual PR) to 47 files. This is the First Way broken. The team is not moving faster; they are creating an unreviewable blob.

Metric: lead time for this PR = 6 days. The team’s average before AI = 1.5 days.
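A batch-size limit only helps if something enforces it. The sketch below shows one possible gate: it counts the files in the output of `git diff --numstat` (one line per changed file) and rejects the change when the count exceeds a limit. The limit of 10 files, the function names, and the sample file paths are assumptions for illustration. Wiring this into a pipeline is left out.

```python
def count_changed_files(numstat: str) -> int:
    """Count files in `git diff --numstat` output (one non-empty line per file)."""
    return sum(1 for line in numstat.splitlines() if line.strip())


def check_pr_size(numstat: str, max_files: int = 10) -> tuple[bool, str]:
    """Return (ok, message) for a change set, given an assumed file limit."""
    changed = count_changed_files(numstat)
    if changed > max_files:
        return False, f"PR touches {changed} files (limit {max_files}); split it."
    return True, f"PR touches {changed} files (limit {max_files})."


# Simulated numstat output for a 47-file PR; the paths are hypothetical.
# Each line is "<added>\t<deleted>\t<path>".
big_pr = "\n".join(f"12\t3\tsrc/File{i}.cs" for i in range(47))
small_pr = "\n".join(f"5\t1\tsrc/File{i}.cs" for i in range(3))

big_ok, big_message = check_pr_size(big_pr)
small_ok, small_message = check_pr_size(small_pr)
print(big_message)
print(small_message)
```

With the assumed limit, the 47-file PR from this scene is rejected before a reviewer ever opens it, while the team's usual 3-file PR passes.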

Scene 2 — The Second Way breaks

The PR finally gets reviewed. Their AI code review bot leaves 22 comments:

- “Potential SQL injection” (on a method that uses EF Core parameterised queries; false positive)

- “Missing null check” (on a non-nullable type; false positive)

- “Use of deprecated algorithm” (on `ML-KEM-768`, which is the current standard; the bot does not know PQC)

- “Unsafe string concatenation” (on a log message; false positive)

- …and 18 more

Of 22 comments, 3 are real issues. 19 are noise.

```
[AI Review] Line 142: "Use of deprecated algorithm ML-KEM-768.
Consider migrating to AES-256."

-- Developer response: "ML-KEM-768 is a current post-quantum KEM (FIPS 203),
not a deprecated algorithm, and AES-256 is a symmetric cipher that
cannot replace it. The bot is wrong. Ignore."
```

After two more PRs like this, the team stops reading AI review comments. This is the Second Way broken. Feedback is there, but the signal-to-noise ratio has collapsed.

Metric: comments acted on = 14% (down from 78% for human reviewers).
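The 14% figure is consistent with the comment counts in this scene, assuming the developers acted on exactly the 3 real issues and ignored the other 19. A small sketch of that calculation, with a hypothetical `signal_rate` helper:

```python
def signal_rate(real_issues: int, total_comments: int) -> float:
    """Fraction of review comments that point at a real issue."""
    if total_comments <= 0:
        raise ValueError("total_comments must be positive")
    if not 0 <= real_issues <= total_comments:
        raise ValueError("real_issues must be between 0 and total_comments")
    return real_issues / total_comments


# Numbers from this scene: 22 comments, 3 real issues.
rate = signal_rate(real_issues=3, total_comments=22)
noise = 1 - rate

print(f"signal: {rate:.0%}, noise: {noise:.0%}")
```

Tracking this ratio per reviewer, human or bot, is one simple way to notice that a feedback channel is losing its signal before the team stops reading it.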

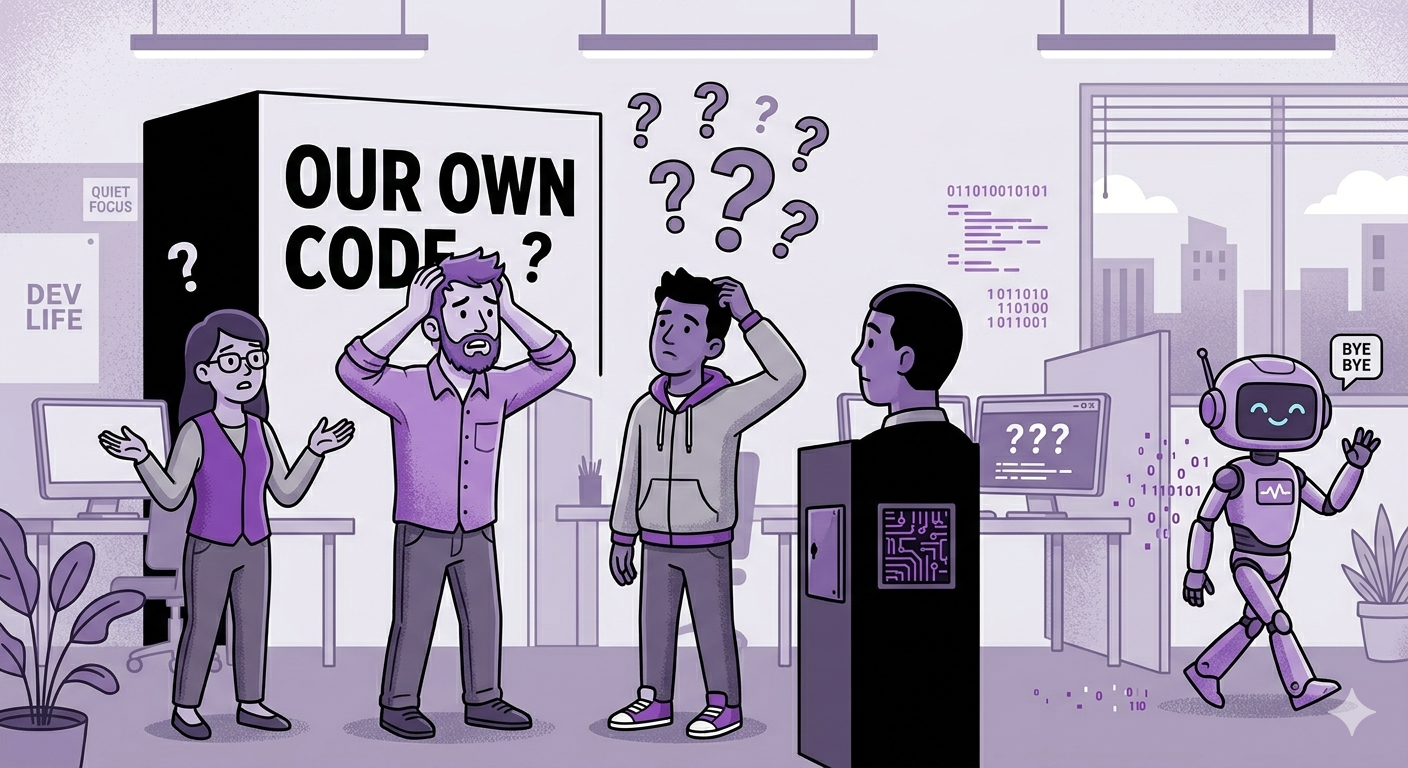

Scene 3 — The Third Way breaks

The PR merges. Everything works. Audit passes.

Three weeks later, production goes down. Key rotation has failed silently. The automated job was supposed to rotate the ML-KEM keypair every 90 days, but it crashed on the 8th day.

The on-call engineer looks at the code. It was written by Copilot. Nobody on the team can explain:

- Why `ML-KEM-768` and not `ML-KEM-1024`?

- What the `RotateKeys` method does between line 45 and line 120?

- Why there is a retry policy with exponential backoff but no circuit breaker?

The original dev who “wrote” it shrugs. “Copilot suggested it. It looked right.”

This is the Third Way broken. The code exists, but the knowledge does not. The team shipped, but did not learn.

Metric: bus factor for the QuantumAPI integration = 0.
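The rotation job in this scene failed silently, which is also a feedback problem. One common way to make a crashed background job noisy is a heartbeat check: the job records a timestamp every time it runs, and a separate check raises an alert when that timestamp gets too old. The sketch below assumes the job is expected to check in at least once a day. That schedule, the `job_is_silent` function, and the dates are all hypothetical.

```python
from datetime import datetime, timedelta, timezone


def job_is_silent(last_heartbeat: datetime, now: datetime,
                  max_silence: timedelta = timedelta(days=1)) -> bool:
    """Return True when the job has not checked in within max_silence."""
    return now - last_heartbeat > max_silence


# Hypothetical timeline matching the scene: the job's last sign of life
# is on day 8, and nobody looks until three weeks (21 days) in.
merged_at = datetime(2025, 1, 1, tzinfo=timezone.utc)
last_heartbeat = merged_at + timedelta(days=8)

# Evaluate the check on a few days of the timeline.
silent_by_day = {
    day: job_is_silent(last_heartbeat, merged_at + timedelta(days=day))
    for day in (8, 9, 10, 21)
}
for day, silent in silent_by_day.items():
    print(f"day {day}: silent={silent}")
```

Under these assumptions the check starts firing on day 10, well before the outage in week three. It does not replace understanding `RotateKeys`, but it turns a silent failure into a visible one.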

What this team will do next

In the next 5 articles, we will fix each of these scenes.

- Article 2: the team adopts SDD. The 47-file PR becomes three 5-file PRs, each with a clear spec.

- Article 3: the team applies GOTCHA to their AI reviewer. The 22 comments become 4 real issues.

- Article 4: the team uses AI as a tutor, not just a generator. The dev actually learns what ML-KEM-768 is.

- Article 5: we compare SDD, ATLAS, and GOTCHA honestly. None of them is a silver bullet.

- Article 6: we build the full playbook with DORA and AI-specific metrics.

TEMPLATE

Before the next article, run this self-assessment on your own team. It is a short checklist, 15 questions across the Three Ways. Score each question 0-2:

First Way (Flow):

- Are AI-assisted PRs smaller or bigger than manual PRs in your repo?

- What is your average batch size for AI-generated changes?

- How long does an AI-generated PR sit in review vs a manual one?

- Do you have a limit on PR size? Is it enforced?

- Can a reviewer understand an AI PR in under 10 minutes?

Second Way (Feedback):

- What percentage of AI code review comments do your devs act on?

- Do you measure false positive rate for your AI reviewer?

- Does your AI reviewer have context about your domain (PQC, finance, healthcare, etc.)?

- Are production alerts connected back to the AI-generated code that caused them?

- Do you retrain or tune your AI prompts based on review outcomes?

Third Way (Learning):

- Can every dev explain the last AI-written function they merged?

- Do you pair juniors with AI, or do juniors code alone with AI?

- Are postmortems done on AI-related incidents?

- Do you have a shared internal doc with AI patterns that worked (and failed)?

- Is AI usage a topic in your retros?

Score 25-30: you are ahead of 95% of teams. Score 15-24: typical. Room to improve. Score under 15: you are the team from this article.
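If you collect answers from each developer separately, the scoring can be automated in a few lines. The sketch below sums 15 answers of 0 to 2 per developer, averages the totals across the team, and maps the result to the three bands above. The function names and sample answers are made up. Because an average can land between 24 and 25, the sketch treats anything below 25 as "typical".

```python
from statistics import mean

QUESTION_COUNT = 15  # 5 questions for each of the Three Ways


def total_score(answers: list[int]) -> int:
    """Sum one developer's answers after validating them."""
    if len(answers) != QUESTION_COUNT:
        raise ValueError(f"expected {QUESTION_COUNT} answers, got {len(answers)}")
    if any(a not in (0, 1, 2) for a in answers):
        raise ValueError("each answer must be 0, 1, or 2")
    return sum(answers)


def team_score(answers_per_dev: list[list[int]]) -> float:
    """Average the per-developer totals."""
    return mean(total_score(answers) for answers in answers_per_dev)


def band(score: float) -> str:
    """Map a score to the bands used in this article."""
    if score >= 25:
        return "ahead of most teams"
    if score >= 15:
        return "typical, room to improve"
    return "the team from this article"


# Hypothetical answers from three developers (totals 25, 15 and 10).
answers_per_dev = [
    [2] * 10 + [1] * 5,
    [1] * 15,
    [1] * 10 + [0] * 5,
]

score = team_score(answers_per_dev)
print(f"team score: {score:.1f} -> {band(score)}")
```

In this made-up example, one developer scores 25 while the team average lands in the "typical" band. The spread between developers is usually the most interesting part of the result.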

CHALLENGE

Run the assessment on your team this week. Do not cheat — ask each dev separately and average the scores. You will probably be surprised.

In the next article, we tackle the First Way head-on. Why AI pushes you toward bigger batches, how SDD changes the game, and how ATLAS keeps the human in charge. With our QuantumAPI team as the running example — same integration, but this time done right.

→ Article 2: Specs Before Code: Why AI Needs SDD to Keep Batches Small (coming soon)

If this series helps you, consider sponsoring me on GitHub or buying me a coffee.

This is part 1 of 6 in the series “The Three Ways in the AI Era”. Subscribe at blog.victorz.cloud to get the next article.
