The Three Ways in the AI Era -- Part 3

AI Code Review That Doesn't Cry Wolf

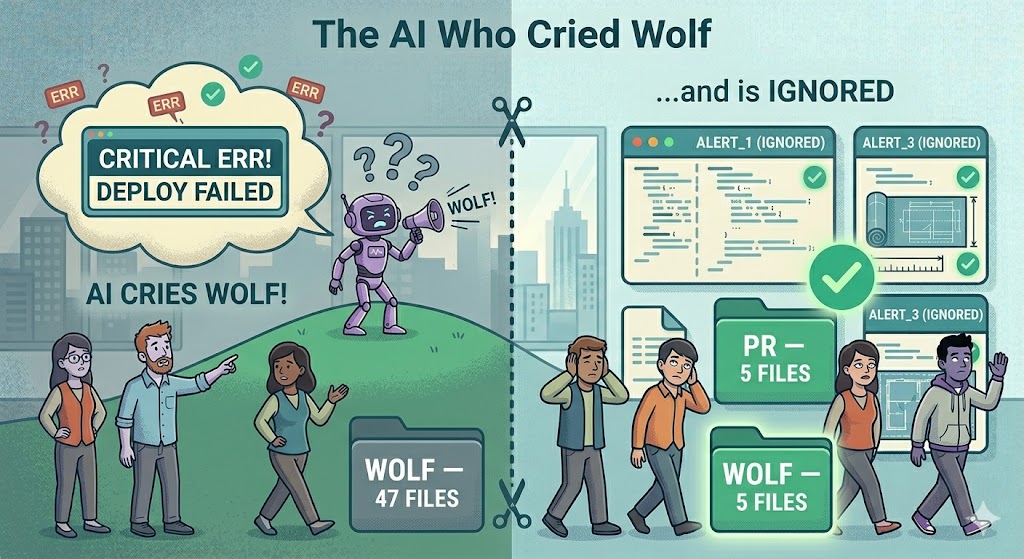

In the last article our QuantumAPI team fixed their batch size. A 47-file monster PR became three small specs of 5 files each. The First Way — Flow — was restored.

Then the next PR hit the AI code review bot. It left 22 comments. 19 were noise. The reviewer glanced at it, scrolled, and closed the tab. Within a week, every dev on the team had learned to ignore the AI reviewer entirely.

That is the Second Way broken. Feedback exists, but nobody reads it. In DevOps terms, this is worse than having no reviewer at all — because the team still feels covered while shipping bugs into production.

PROBLEM

The story of the boy who cried wolf is 2,500 years old and still the best metaphor for bad alerting.

Here is our team’s reviewer history after one month of use:

- 22 comments on a 5-file PR about field encryption

- “Potential SQL injection” on a method using parameterised EF Core queries

- “Use of deprecated algorithm” on ML-KEM-768, the NIST-standard post-quantum KEM

- “Missing null check” on a non-nullable type

- “Hardcoded secret” on the string "vault-id", which is a config key name, not a secret value

- “Unsafe string concatenation” on a log message

Out of 22 comments, 3 were real issues. The rest were noise.

Gene Kim and his co-authors wrote in The DevOps Handbook that the Second Way, Feedback, must be amplified, fast, and trustworthy. Drop any one of those three and the whole thing collapses. Our team had amplified feedback (22 comments!), it was fast (came in 90 seconds), but it was not trustworthy. So they turned it off in their heads. Feedback = 0.

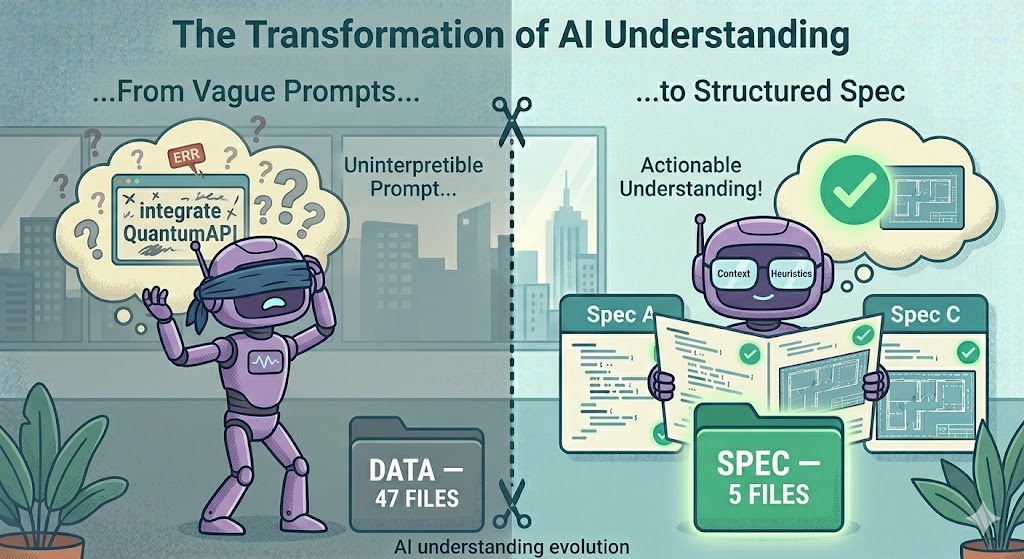

The problem is not that AI reviewers are bad at reading code. The problem is that most AI reviewers are given a generic prompt with no context about the domain, the stack, or the team’s preferences. You would never hire a human reviewer without telling them “we use EF Core, we use PQC, we don’t care about style nits”. But that is exactly how most teams deploy their AI reviewer.

SOLUTION

This is where GOTCHA comes in. GOTCHA is a prompt structure for AI agents. It has 6 sections:

| Letter | Section | Code review example |
|---|---|---|
| G | Goals | Catch real bugs and security issues in PQC-enabled .NET code. Nothing else. |
| O | Orchestration | Read the PR diff → load the linked spec → compare against team style → produce max 5 comments. |
| T | Tools | Mistral Large 2, PR diff, spec.md file, team style guide, QuantumAPI SDK docs. |
| C | Context | This codebase: .NET 10, EF Core 10, QuantumAPI SDK v2.3, ML-KEM-768 for KEMs, ML-DSA-65 for signatures. |
| H | Heuristics | Skip false positives on PQC. No style nits. No “missing null check” on non-nullable types. Flag only issues listed in team-review-rules.md. |
| A | Args | The actual PR diff + the spec path + the PR title. |

The magic is in Context and Heuristics. A generic reviewer has neither. That is why it cries wolf. Once you give it both, it stops.

There is a nice side effect. GOTCHA prompts are portable. The same prompt structure works for code review, code generation, incident triage, or any AI task. The team will reuse this same structure in the next articles.
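To make the portability concrete, here is a minimal sketch of the six sections as a small Python data structure. The `GotchaPrompt` class and its `render` method are illustrative names made up for this example, not part of any library or of the team's tooling. The section texts are shortened from the table above.

```python
from dataclasses import dataclass, fields


@dataclass
class GotchaPrompt:
    """One field per GOTCHA section, in the order the acronym spells."""
    goals: str
    orchestration: str
    tools: str
    context: str
    heuristics: str
    args: str

    def render(self, title: str) -> str:
        """Render the six sections as a markdown prompt."""
        parts = [f"# {title}"]
        for field in fields(self):
            letter = field.name[0].upper()
            heading = f"## {letter} - {field.name.capitalize()}"
            parts.append(f"{heading}\n\n{getattr(self, field.name)}")
        return "\n\n".join(parts) + "\n"


review = GotchaPrompt(
    goals="Catch real bugs and security issues. Nothing else.",
    orchestration="Read the diff, load the spec, compare, emit max 5 comments.",
    tools="PR diff, spec.md, team style guide.",
    context=".NET 10, EF Core 10, ML-KEM-768 for KEMs.",
    heuristics="No style nits. Skip false positives on PQC.",
    args="<PR diff> <spec path> <PR title>",
)
prompt_text = review.render("GOTCHA Code Review Prompt")
print(prompt_text)
```

Swapping the six strings is all it takes to point the same structure at incident triage or code generation.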

EXECUTE

Step 1 — The old reviewer

The team was using a generic GitHub Marketplace AI reviewer with a default prompt:

“Review this pull request for bugs, security issues, and code quality problems.”

That is all. No context. No constraints. No domain knowledge. The result was the 22-comment mess we saw above.

They decided to replace it with a custom reviewer using Mistral Large via Scaleway’s Generative APIs — European hosting, cheaper than OpenAI, good enough for code review. And a GOTCHA-structured prompt.

Step 2 — Build the GOTCHA prompt

They saved this as .ai/review-prompt.md in the repo:

```markdown
# GOTCHA Code Review Prompt - QuantumAPI Team

## G - Goals
You are a senior .NET and security reviewer for a team integrating
QuantumAPI (post-quantum encryption) into a financial services app.

Your only job is to catch:
1. Real bugs that will cause runtime failures
2. Real security issues (not theoretical ones)
3. Spec violations (when the PR adds things outside the linked spec)

Ignore everything else. Produce AT MOST 5 comments per PR.
If there is nothing real to flag, say "No issues found."

## O - Orchestration
For each PR:
1. Read the PR diff (full context, not just the patch)
2. Load the spec file referenced in the PR description
3. Compare the diff against the spec's scope and non-goals
4. Check each changed file against the Heuristics below
5. Rank findings by severity (bug > security > spec-violation)
6. Emit top 5 max, as inline PR comments

## T - Tools
- Mistral Large 2 (your model)
- PR diff (provided as input)
- spec.md (path in PR description)
- team-review-rules.md (loaded as context)
- QuantumAPI SDK v2.3 docs (loaded as context)

## C - Context
Stack: .NET 10, EF Core 10 (code-first), PostgreSQL 16, AKS, Azure DevOps.
Crypto: ML-KEM-768 for KEMs, ML-DSA-65 for signatures, AES-256-GCM
for symmetric. All wrapped via QuantumAPI SDK v2.3 — do NOT suggest
"migrating from ML-KEM-768 to anything else". ML-KEM-768 IS the target.
Tests: xUnit. Auth: JWT via Entra ID. Logging: Serilog + App Insights.
The team has a rule: PR must be ≤ 8 files. Specs live in `specs/`.

## H - Heuristics
- NEVER flag "SQL injection" on EF Core LINQ or parameterised queries
- NEVER flag "deprecated algorithm" on ML-KEM-*, ML-DSA-*, AES-256
- NEVER flag "missing null check" on non-nullable reference types
- NEVER flag "hardcoded secret" on config key names or constants
- NEVER leave style comments (naming, formatting, comments, docs)
- NEVER suggest refactors outside the diff
- ALWAYS verify spec compliance: if the PR touches files not in the
  spec's Scope, flag it as a spec-violation
- ALWAYS check CancellationToken propagation in async methods
- ALWAYS check that new secrets come from IConfiguration/KeyVault,
  not from hardcoded strings or env var literals
- If a finding is "maybe" rather than "definitely", skip it

## A - Args
- PR diff: <provided at runtime>
- Spec path: <from PR description>
- PR title: <from PR metadata>
- Changed files: <list from diff>
```

50 lines. One file in the repo. Versioned with the code.
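Because the prompt is a versioned file, it can be checked like any other file in the repo. The sketch below is one possible lint, not something the team described: it confirms that all six GOTCHA sections are present and in order. `check_prompt` and `section_letters` are hypothetical helper names, and the check assumes each section starts with a level-2 heading whose first word is the section letter.

```python
import re

REQUIRED = ["G", "O", "T", "C", "H", "A"]


def section_letters(prompt_text: str) -> list:
    """Return the single leading letter of every level-2 heading, in file order."""
    return re.findall(r"^##\s+([A-Z])\b", prompt_text, flags=re.MULTILINE)


def check_prompt(prompt_text: str) -> list:
    """Return a list of problems; an empty list means the structure is intact."""
    letters = section_letters(prompt_text)
    problems = [f"missing section {x}" for x in REQUIRED if x not in letters]
    # Only judge ordering once every section exists.
    gotcha_only = [x for x in letters if x in REQUIRED]
    if not problems and gotcha_only != REQUIRED:
        problems.append("sections are duplicated or out of order")
    return problems
```

A pipeline step could read `.ai/review-prompt.md`, call `check_prompt`, and fail the build when the returned list is not empty.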

Step 3 — Wire it up in Azure DevOps

A small pipeline that runs on every PR. Saved as pipelines/ai-review.yml:

```yaml
trigger: none

pr:
  branches:
    include: [ main ]

pool:
  vmImage: ubuntu-latest

steps:
  # Assumption: fetch full history so the script's
  # `git diff origin/main...HEAD` can find a merge base.
  - checkout: self
    fetchDepth: 0

  - task: UsePythonVersion@0
    inputs:
      versionSpec: '3.12'

  - script: pip install httpx azure-devops
    displayName: Install deps

  - script: python scripts/ai_review.py
    displayName: AI code review
    env:
      SCW_SECRET_KEY: $(SCW_SECRET_KEY)
      SYSTEM_ACCESSTOKEN: $(System.AccessToken)
```

And the Python script that does the actual work (scripts/ai_review.py, shortened):

```python
import json
import os
import subprocess

import httpx


def run(cmd):
    """Run a shell command and return its stdout as text."""
    return subprocess.check_output(cmd, shell=True, text=True)


# 1. Read the PR diff
diff = run("git diff origin/main...HEAD --unified=5")

# 2. Load the GOTCHA prompt
with open(".ai/review-prompt.md") as f:
    prompt = f.read()

# 3. Read the linked spec (from PR title convention: "[spec-001] ...")
pr_title = os.environ["SYSTEM_PULLREQUEST_PULLREQUESTTITLE"]
spec_id = pr_title.split("]")[0].strip("[ ")
with open(f"specs/{spec_id}/spec.md") as f:
    spec = f.read()

# 4. Call Mistral Large via Scaleway
r = httpx.post(
    "https://api.scaleway.ai/v1/chat/completions",
    headers={"Authorization": f"Bearer {os.environ['SCW_SECRET_KEY']}"},
    json={
        "model": "mistral-large-2411",
        "messages": [
            {"role": "system", "content": prompt},
            {"role": "user", "content": f"SPEC:\n{spec}\n\nDIFF:\n{diff}"},
        ],
        "temperature": 0.1,
    },
    timeout=120,
)
r.raise_for_status()  # surface API errors here rather than as a KeyError below
findings = r.json()["choices"][0]["message"]["content"]

# 5. Post comments via Azure DevOps REST API
# (post_pr_comment is part of the full script and is omitted in this shortened version)
post_pr_comment(findings)
```

60 lines total. Not a product. Not a framework. Just enough to replace the 22-comment noise machine.
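The shortened script leaves out `post_pr_comment`. What follows is a sketch of what such a helper might look like, not the team's actual code. It assumes the repo lives in Azure Repos, that the pipeline exposes the predefined variables `System.CollectionUri`, `System.TeamProject`, `Build.Repository.ID` and `System.PullRequest.PullRequestId` as environment variables, and that the build identity is allowed to contribute to pull requests. It posts the whole review as one comment thread rather than as inline comments, and uses only the standard library so it runs without extra dependencies.

```python
import json
import os
import urllib.request
from urllib.parse import quote


def build_thread_request(env, text):
    """Build the URL, headers and JSON body for a new PR comment thread."""
    base = env["SYSTEM_COLLECTIONURI"].rstrip("/")
    project = quote(env["SYSTEM_TEAMPROJECT"], safe="")
    url = (
        f"{base}/{project}/_apis/git/repositories/{env['BUILD_REPOSITORY_ID']}"
        f"/pullRequests/{env['SYSTEM_PULLREQUEST_PULLREQUESTID']}"
        "/threads?api-version=7.1"
    )
    headers = {
        "Authorization": f"Bearer {env['SYSTEM_ACCESSTOKEN']}",
        "Content-Type": "application/json",
    }
    body = {
        # commentType 1 = plain text comment, status 1 = active thread
        "comments": [{"parentCommentId": 0, "content": text, "commentType": 1}],
        "status": 1,
    }
    return url, headers, json.dumps(body).encode("utf-8")


def post_pr_comment(text, env=os.environ):
    """POST the review text as a single thread; returns the HTTP status code."""
    url, headers, data = build_thread_request(env, text)
    request = urllib.request.Request(url, data=data, headers=headers, method="POST")
    with urllib.request.urlopen(request, timeout=30) as response:
        return response.status
```

Keeping the request construction separate from the network call makes the helper easy to unit-test with a fake environment dictionary.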

Step 4 — Same PR, new reviewer

They re-ran the same 5-file encryption PR through the new reviewer. The output:

```
[AI Review] 4 findings for PR #412

1. src/Infrastructure/QuantumApiFieldEncryptor.cs:18
   CancellationToken parameter accepted but not propagated to
   client.Vault.EncryptAsync. This will break cancellation.
   Severity: bug

2. src/Infrastructure/Data/QuantumEncryptedConverter.cs:31
   Value converter does not handle null input. Will throw on
   reading a nullable encrypted field. Add null coalesce.
   Severity: bug

3. src/Infrastructure/QuantumApiFieldEncryptor.cs:9
   QuantumApiOptions.VaultId is read from appsettings.json in
   AddQuantumEncryption. Spec requires vault ID via environment
   variable. Violates spec constraint.
   Severity: spec-violation

4. tests/Infrastructure.Tests/QuantumApiFieldEncryptorTests.cs
   Missing integration test covering the round-trip write/read
   acceptance criterion from the spec.
   Severity: spec-violation
```

4 comments. All actionable. All real. The reviewer fixed the first two in 20 minutes. The third and fourth were genuine spec violations that the human reviewer would probably have missed on a fast review.
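The prompt asks the model for at most 5 findings, ranked bug > security > spec-violation. A model can still drift from that, so a script may want to enforce it after the fact. Here is a hedged sketch that parses output shaped like the listing above, sorts by severity and caps the list. `Finding` and `parse_findings` are made-up names, and the parser assumes the model sticks to the "number, path, message, Severity" layout, which is not guaranteed.

```python
import re
from dataclasses import dataclass
from typing import Optional

SEVERITY_RANK = {"bug": 0, "security": 1, "spec-violation": 2}
# "1. path/to/File.cs:18" or "4. path/to/File.cs" (line number optional)
HEADER = re.compile(r"^\d+\.\s+(\S+?)(?::(\d+))?$")


@dataclass
class Finding:
    path: str
    line: Optional[int]
    message: str
    severity: str


def parse_findings(text: str, limit: int = 5) -> list:
    """Parse the review text, rank by severity, keep at most `limit` findings."""
    findings, current, body = [], None, []
    for raw in text.splitlines():
        line = raw.strip()
        header = HEADER.match(line)
        if header:
            current, body = header, []
        elif current and line.lower().startswith("severity:"):
            findings.append(Finding(
                path=current.group(1),
                line=int(current.group(2)) if current.group(2) else None,
                message=" ".join(body),
                severity=line.split(":", 1)[1].strip().lower(),
            ))
            current = None
        elif current and line:
            body.append(line)
    # Unknown severities sort last; the sort is stable within a severity.
    findings.sort(key=lambda f: SEVERITY_RANK.get(f.severity, len(SEVERITY_RANK)))
    return findings[:limit]


sample = """[AI Review] 2 findings for PR #412

1. tests/Infrastructure.Tests/QuantumApiFieldEncryptorTests.cs
   Missing integration test covering the round-trip write/read
   acceptance criterion from the spec.
   Severity: spec-violation

2. src/Infrastructure/QuantumApiFieldEncryptor.cs:18
   CancellationToken parameter accepted but not propagated to
   client.Vault.EncryptAsync. This will break cancellation.
   Severity: bug
"""
ranked = parse_findings(sample)
for finding in ranked:
    print(finding.severity, finding.path, finding.line)
```

With structured findings in hand, the script could post each one as its own inline comment instead of one block of text.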

| Metric | Old reviewer | GOTCHA reviewer |
|---|---|---|
| Comments per PR | 22 | 4 |
| Signal-to-noise | 14% | 100% |
| Comments acted on | 14% | 100% |
| Reviewer trust | "ignore it" | "read it first" |
| Runtime | 90s | 70s |
| Monthly cost (Scaleway) | - | ~€12 |

Second Way restored. Feedback is amplified, fast, and trustworthy again.

TEMPLATE

Reusable GOTCHA code review prompt. Save as .ai/review-prompt.md in your repo and fill in the <placeholders>:

```markdown
# GOTCHA Code Review Prompt - <team name>

## G - Goals
<What must the reviewer catch? What should it ignore?>

## O - Orchestration
<Step-by-step: read diff → load spec → compare → rank → emit>

## T - Tools
<Model, diff, spec, style guide, SDK docs>

## C - Context
<Stack, versions, domain-specific algorithms, team conventions>

## H - Heuristics
- NEVER <domain-specific false positive 1>
- NEVER <domain-specific false positive 2>
- ALWAYS <domain-specific must-check 1>
- ALWAYS <domain-specific must-check 2>
- If a finding is "maybe" rather than "definitely", skip it

## A - Args
- PR diff: <runtime>
- Spec path: <runtime>
- Changed files: <runtime>
```

Rule of thumb: your Heuristics section should start empty. Add a NEVER rule every time you get a false positive. Add an ALWAYS rule every time a real bug slips through. After 4-6 weeks the prompt becomes a living document of your team’s taste.
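The rule of thumb is easier to follow when adding a rule is a one-liner. As a rough sketch, assuming the prompt keeps the `## H` heading from the template, a helper like the hypothetical `add_heuristic` below appends a NEVER or ALWAYS bullet to the end of the Heuristics section and leaves the rest of the file untouched.

```python
import re


def add_heuristic(prompt_text: str, kind: str, rule: str) -> str:
    """Append '- KIND rule' as the last bullet of the Heuristics section."""
    if kind not in ("NEVER", "ALWAYS"):
        raise ValueError("kind must be NEVER or ALWAYS")
    lines = prompt_text.splitlines()
    start = next(
        (i for i, text in enumerate(lines) if re.match(r"^##\s+H\b", text)), None
    )
    if start is None:
        raise ValueError("no Heuristics section found")
    # The section ends at the next level-2 heading, or at end of file.
    end = next(
        (i for i in range(start + 1, len(lines)) if lines[i].startswith("## ")),
        len(lines),
    )
    # Step back over trailing blank lines so the bullet joins the existing list.
    insert_at = end
    while insert_at > start + 1 and not lines[insert_at - 1].strip():
        insert_at -= 1
    lines.insert(insert_at, f"- {kind} {rule}")
    return "\n".join(lines) + "\n"


template = (
    "## H - Heuristics\n"
    "- NEVER flag style nits\n"
    "\n"
    "## A - Args\n"
    "- PR diff: <runtime>\n"
)
updated = add_heuristic(template, "NEVER", 'flag "hardcoded secret" on config key names')
print(updated)
```

Each call is then one small commit, so the history of the prompt file doubles as a log of every false positive and missed bug.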

CHALLENGE

Take your current AI reviewer. Pull the last 10 comments it left. Count how many your devs actually acted on. If it is under 50%, your reviewer is crying wolf. Spend one hour this week writing a GOTCHA prompt for your context. Measure the signal-to-noise next week.
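The count itself takes a few lines. A minimal sketch, using made-up function names and the 50% threshold from the challenge above. The sample data is the old reviewer from this article: 3 of 22 comments were real.

```python
def acted_on_ratio(acted_on: list) -> float:
    """Share of review comments the developers actually acted on."""
    if not acted_on:
        raise ValueError("need at least one comment to measure")
    return sum(acted_on) / len(acted_on)


def is_crying_wolf(acted_on: list, threshold: float = 0.5) -> bool:
    """True when fewer than `threshold` of the comments led to a change."""
    return acted_on_ratio(acted_on) < threshold


# One boolean per comment: True if someone acted on it.
old_reviewer = [True] * 3 + [False] * 19
print(f"{acted_on_ratio(old_reviewer):.0%}", is_crying_wolf(old_reviewer))
# prints: 14% True
```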

In the next article we tackle the Third Way — Continuous Learning. Our QuantumAPI team now ships small PRs with useful review. But when production broke last month, nobody on the team could explain why ML-KEM-768 and not ML-KEM-1024. Code shipped, knowledge did not. Time to use AI as a tutor, not just a generator.

→ Article 4: Continuous Learning When AI Writes Half Your Code (coming soon)


This is part 3 of 6 in the series “The Three Ways in the AI Era”. Previous: Specs Before Code.
